Adoption is table stakes. Adaptation is the real engineering challenge.

Code generation with AI is a solved problem. Not perfect, not without nuance, but solved in the way that matters: a competent engineer with a modern coding assistant can produce working, production-quality code faster than ever before. The tooling will keep improving. The models will keep getting better. That race is being run, and it’s being run well.

But here’s what I keep seeing across engineering organizations: the conversation is stuck on adoption. Which model? Which assistant? Which agent framework? These are important questions, but they’re the wrong bottleneck. The harder, more consequential challenge isn’t AI adoption — it’s AI adaptation.

The distinction matters. AI adoption is acquiring and deploying generalized AI tools, models, and platforms. Most organizations are doing this aggressively. New assistants get evaluated monthly. Teams experiment with the latest frontier models. Engineering leaders allocate budgets for AI tooling. This is table stakes.

AI adaptation is something else entirely. It’s contextualizing those generalized capabilities for your organization’s specific development lifecycle — redefining stages, roles, responsibilities, and ownership so that AI isn’t just sprinkled on top of existing processes but fundamentally integrated into how you build software. Almost nobody is doing this deliberately.

And in the absence of deliberate adaptation, something familiar is emerging: Shadow AI.

Just as Shadow IT appeared when employees adopted cloud tools and SaaS products without IT governance, Shadow AI is spreading through engineering organizations. Developers use different coding assistants with different configurations. Teams build their own prompt libraries, their own workflows, their own automation scripts. Some teams use spec-driven approaches; others vibe code everything. Everyone is experimenting. Nobody is consolidating. There’s no shared vocabulary for what’s working, no mechanism to evaluate practices across teams, and no organizational learning from the collective experimentation.

The result is what I described in Beyond Code Completion: organizations sprinkling the “GenAI magic dust” all over their existing processes, getting percentage improvements instead of the transformational gains that AI-native approaches can deliver.

Most organizations are letting adaptation happen organically. That’s a trap.

The Bottom-Up Trap

The tempting approach is to let things grow naturally. Give teams freedom to experiment. Let a thousand flowers bloom. The innovators will figure it out, and the best practices will rise to the top.

This feels right. Innovation is inherently messy. Early-stage experimentation needs room to breathe. Top-down mandates have a well-earned reputation for killing creativity. And there’s genuine wisdom in letting practitioners closest to the work discover what works for them.

But here’s where it breaks down. Decentralized experimentation without a structure to consolidate learning creates a specific set of problems that become visible only at organizational scale.

Unmanageable repetition. Multiple teams solve the same problems independently. One team builds a spec-driven workflow for their React frontend. Another team builds a different spec-driven workflow for their React frontend. A third team doesn’t know either exists and starts from scratch. The solutions are incompatible, the lessons aren’t shared, and the organization pays the cost of solving the same problem three times.

Over-contextual optimization. Practices get tuned for one individual’s workflow, one team’s tech stack, one project’s constraints. A developer builds an elaborate prompting strategy that works brilliantly for their specific codebase and their specific mental model. But when another developer tries to adopt it, it falls apart — too many implicit assumptions, too much context baked in. The practice works locally but breaks when transplanted.

Overlap and reinvention. Innovators across the organization chase local maxima, with no formal, generalized way to measure their practices and technical artifacts and decide which are worth scaling. There’s no shared framework for asking: “Is this approach better than what Team B is doing? How would we even compare?” Without that framework, every team optimizes in isolation.

No shared vocabulary. When everyone measures differently — or doesn’t measure at all — it’s impossible to compare what’s working across teams. One team talks about “velocity gains.” Another talks about “quality improvements.” A third measures “lines of code generated.” These aren’t comparable metrics, and without comparability, there’s no organizational learning.

The result is pockets of excellence surrounded by organizational confusion. Individual teams may be thriving, but the organization as a whole isn’t compounding those gains. As I’ve argued before, tools benefit individuals, processes help teams, and culture elevates organizations. Bottom-up experimentation optimizes the first level and leaves the other two to chance.

Structure Before Scale

If pure bottom-up doesn’t work, does that mean we need top-down mandates? Not exactly. But we do need a centralized beginning.

A top-down starting point introduces structure and a clear problem statement. Desired outcomes should be defined at a level where leadership can see them, so that processes are redesigned for the whole organization rather than for a single team. This isn’t about dictating how every team works. It’s about defining the boundaries within which experimentation happens and creating the mechanisms to capture what’s learned.

Here’s where a principle from software engineering applies directly to organizational design: closed for modification, open for extension.

We use this principle every day in code. A well-designed framework has a stable core architecture that consumers don’t modify. Instead, they extend it — building plugins, implementing interfaces, customizing behavior within defined boundaries. The core provides consistency and interoperability. The extensions provide flexibility and specialization.

Why wouldn’t we apply the same thinking to our engineering practices?

The core methodology — the strategic process that defines how teams move from intent to production — should be stable. Not rigid, not immutable, but stable enough that teams across the organization share a common language, common decision gates, and common quality expectations. This is the part that’s closed for modification.

The team-level practices — the specific workflows, tool integrations, and domain-specific techniques — should be open for extension. Teams customize within the framework’s boundaries. They build tactical skills that fit their stack, their domain, their constraints. But they do so within a structure that makes those skills discoverable, comparable, and shareable.

This is how you get hyper-customization without chaos. Not one-size-fits-all, but a shared architecture that teams extend. The same way modular specification architectures enable composable, scalable design documents, a layered approach to AI adaptation enables composable, scalable engineering practices.

The goal isn’t to eliminate experimentation. It’s to give experimentation a home — a structure that captures its outputs and makes them available to the wider organization.

The Three Layers of AI Adaptation

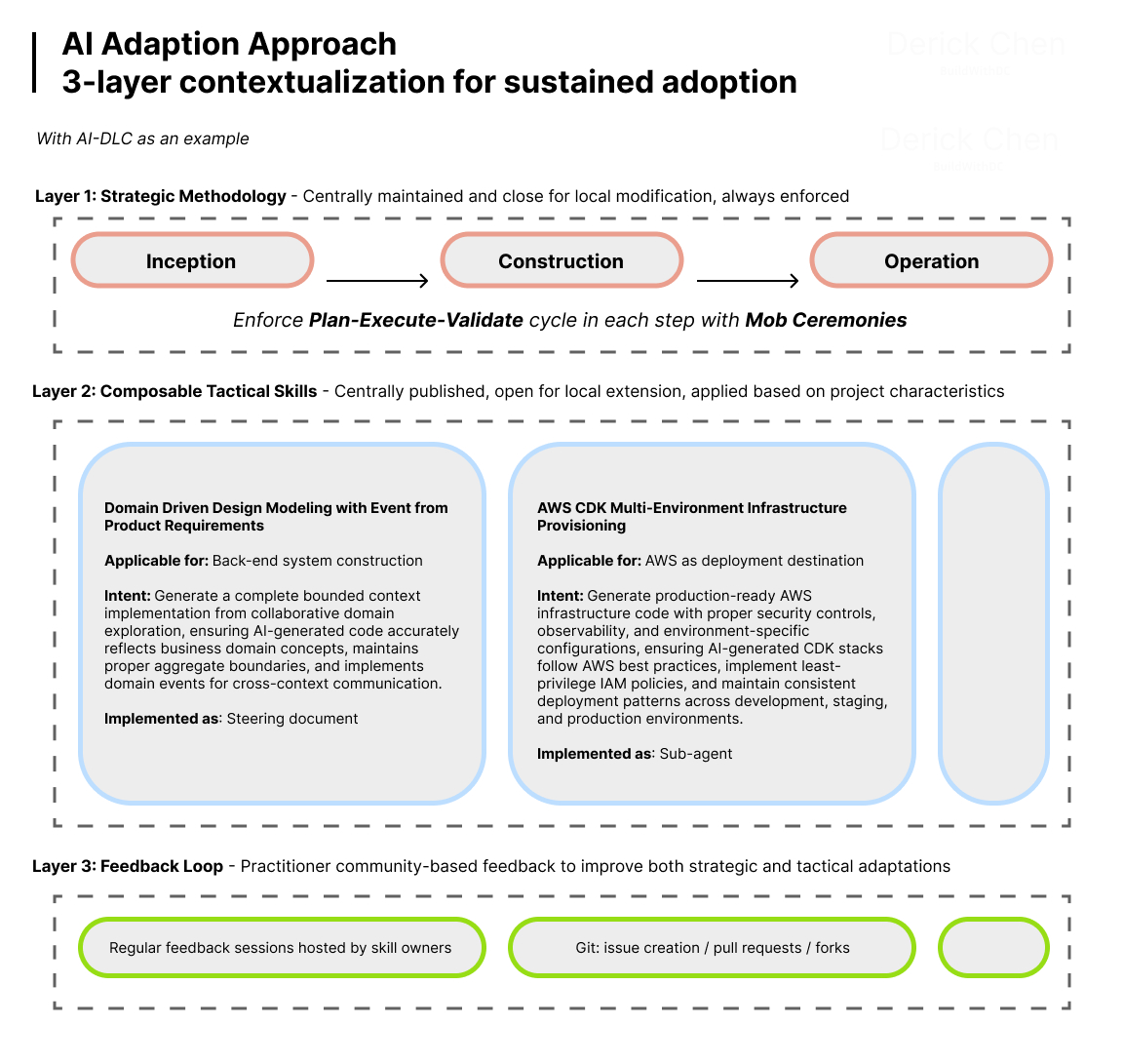

AI adaptation in an engineering organization needs three layers of tailoring and contextualization to get the most out of generalized AI models, tools, and skills. Each layer operates at a different timescale, serves a different purpose, and requires different ownership.

Layer 1: Strategic Methodologies

Strategic methodologies are the orchestration layer. They coordinate multi-step, overarching processes that move teams from business intent to production software. Execution of a strategic methodology is typically measured in hours.

These define the “what” and “when” — the sequence of activities, decision gates, validation checkpoints, and handoff points that structure how work flows through the organization. AI-DLC’s three-phase structure — Inception, Construction, Operation — is an example of a strategic methodology.

Strategic methodologies are owned and maintained at the organizational or program level. They’re analogous to a framework’s core architecture: stable, well-documented, and rarely changed. When they do change, it’s through deliberate evolution informed by feedback from practitioners, not ad hoc modification by individual teams.

The key characteristic: strategic methodologies are generalized. They work across tech stacks, across domains, across team compositions. They provide the skeleton that everything else hangs on.
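To make the idea of a sequence with decision gates concrete, here is a minimal sketch. The phase names come from the AI-DLC example above; everything else (the `Phase` type, the `advance` function, and the gate conditions such as `approved_spec`) is a hypothetical illustration, not part of AI-DLC itself.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Phase:
    name: str
    # Decision gate: must return True before work moves to the next phase.
    gate: Callable[[dict], bool]


# The stable core: an ordered, immutable sequence of phases.
# Gate conditions are assumptions made up for this sketch.
METHODOLOGY: tuple[Phase, ...] = (
    Phase("inception", lambda work: bool(work.get("approved_spec"))),
    Phase("construction", lambda work: bool(work.get("tests_passed"))),
    Phase("operation", lambda work: bool(work.get("deployed"))),
)


def advance(work: dict) -> list[str]:
    """Return the phases a work item has cleared, stopping at the first failed gate."""
    cleared: list[str] = []
    for phase in METHODOLOGY:
        if not phase.gate(work):
            break
        cleared.append(phase.name)
    return cleared
```

Calling `advance({"approved_spec": True})` reports that only inception has been cleared. Nothing in the sketch mentions React, data pipelines, or any particular tool, which is the sense in which the methodology stays generalized.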

Layer 2: Tactical Skills

Tactical skills are domain-specific or technology-specific workflows that solve concrete integration challenges across different platforms and tools. Execution time is typically measured in minutes.

These define the “how” — the specific techniques and tool integrations for executing within the strategic framework. A spec-driven code generation workflow for a particular tech stack is a tactical skill. The Plan-Execute-Validate cycle that teams apply within each phase in AI-DLC is a tactical skill. A domain-specific testing strategy is a tactical skill. An infrastructure provisioning automation for a specific cloud platform is a tactical skill.

Tactical skills are owned by domain experts or platform teams and published for wider consumption. They’re analogous to plugins or extensions: swappable, composable, and specialized. A team working on a React frontend uses different tactical skills than a team building data pipelines, but both operate within the same strategic methodology.

This is where the open/closed principle comes alive. The strategic methodology doesn’t change when a team adopts a new tactical skill. The skill extends the methodology’s capabilities without modifying its core process. Teams get the customization they need without fragmenting the organizational approach.
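In code terms, the relationship might look like the sketch below. It is one possible shape under stated assumptions: `Methodology`, `TacticalSkill`, and `ReactSpecToCode` are hypothetical names invented for illustration, and the phase names are borrowed from the AI-DLC example.

```python
from typing import Protocol


class TacticalSkill(Protocol):
    """Extension point: anything with a name, a target phase, and a run method."""
    name: str
    phase: str

    def run(self, context: dict) -> dict: ...


class Methodology:
    """Closed for modification: the phase order is fixed by the core."""
    PHASES = ("inception", "construction", "operation")

    def __init__(self) -> None:
        self._skills: dict[str, list[TacticalSkill]] = {p: [] for p in self.PHASES}

    def register(self, skill: TacticalSkill) -> None:
        # Open for extension: teams add skills, but only into existing phases.
        if skill.phase not in self._skills:
            raise ValueError(f"unknown phase: {skill.phase}")
        self._skills[skill.phase].append(skill)

    def execute(self, context: dict) -> dict:
        # Run every registered skill in phase order, threading the context through.
        for phase in self.PHASES:
            for skill in self._skills[phase]:
                context = skill.run(context)
        return context


class ReactSpecToCode:
    """Hypothetical team-owned skill for a React frontend."""
    name = "react-spec-to-code"
    phase = "construction"

    def run(self, context: dict) -> dict:
        return {**context, "artifacts": context.get("artifacts", []) + ["components"]}
```

A data pipeline team would register a different class against the same `Methodology`. Adding or swapping a skill never touches `PHASES` or `execute`, and a skill that targets a phase the core does not define is rejected at registration.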

Layer 3: Feedback and Observability Loops

The third layer is what makes the first two sustainable. Without feedback and observability loops, strategic methodologies calcify and tactical skills become tribal knowledge.

This layer establishes mechanisms for reporting issues, measuring effectiveness, and iterating on both strategic methodologies and tactical skills. It includes clear ownership and maintainers for published workflows — someone is responsible for enhancements, just like someone is responsible for maintaining a published library or service.

It also includes metrics and evaluation criteria that allow comparing practices across teams. When Team A’s tactical skill for API testing produces measurably better outcomes than Team B’s approach, the observability layer surfaces that signal. Without it, both teams continue in isolation, never learning from each other.
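As a rough illustration of what “surfacing that signal” could mean, the sketch below ranks skills by a single shared metric. The metric (`escaped_defects`), the `Outcome` record, and the skill names in the usage note are all assumptions chosen for the example. A real organization would pick its own measures and would need far more data before drawing conclusions.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class Outcome:
    team: str
    skill: str            # which tactical skill was used
    task: str             # e.g. "api-testing"
    escaped_defects: int  # hypothetical shared metric; lower is better


def rank_skills(outcomes: list[Outcome], task: str) -> list[tuple[str, float]]:
    """Average the shared metric per skill for one task, best (lowest) first."""
    by_skill: dict[str, list[int]] = defaultdict(list)
    for outcome in outcomes:
        if outcome.task == task:
            by_skill[outcome.skill].append(outcome.escaped_defects)
    averages = ((skill, mean(values)) for skill, values in by_skill.items())
    return sorted(averages, key=lambda pair: pair[1])
```

If Team A logs outcomes against a `contract-tests` skill and Team B against a `manual-checks` skill, `rank_skills(outcomes, "api-testing")` puts the two side by side. The comparison only works because both teams report the same metric for the same kind of task, which is exactly the shared vocabulary the bottom-up approach lacks.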

Think of this as monitoring and alerting for your engineering practices. You can’t improve what you can’t observe. And in the same way that production systems need observability to operate reliably, organizational practices need feedback loops to evolve effectively.

The feedback layer also creates channels for practitioners to surface what’s working and what isn’t — closing the loop between bottom-up experimentation and top-down structure. Innovation still happens at the edges. But now there’s a mechanism to capture it, evaluate it, and scale it.

From Principles to Practice

The three-layer model is a framework, not a mandate. You don’t build all three layers simultaneously. Here’s how I’ve seen organizations approach this effectively.

Start with Layer 1. Establish or adopt a strategic methodology that gives teams a shared language and process skeleton. This doesn’t need to be invented from scratch — AI-DLC is one option, and there are others emerging. The point is to have a common framework that defines how work flows from intent to production, with clear decision gates and validation checkpoints. Without this, everything else is built on sand.

Let Layer 2 emerge from practice. As teams work within the strategic framework, they naturally develop tactical skills: workflows, automations, and techniques specific to their domain and tech stack. The key is publishing and sharing these rather than leaving them as tribal knowledge locked inside one team. Create a mechanism for teams to contribute their tactical skills to a shared repository. Make them discoverable. Make them composable. This is where the Taxi Team structure and mob ceremonies become valuable, because they create natural moments for knowledge to surface and transfer.

Layer 3 is what makes it sustainable. Without feedback loops and clear ownership, the first two layers stagnate. Published workflows need maintainers, just like published software needs maintainers. Establish who owns each strategic methodology and each tactical skill. Define how practitioners report issues, suggest improvements, and contribute enhancements. Build the metrics that let you compare effectiveness across teams.
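A shared repository with ownership can start very small. The sketch below is one hypothetical shape for it: `SkillEntry`, `SkillRegistry`, and the fields on them are illustrative assumptions, not a description of any existing tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SkillEntry:
    name: str
    owner: str            # team or person responsible for maintenance
    phase: str            # where the skill plugs into the methodology
    tags: frozenset[str]  # used for discovery


class SkillRegistry:
    def __init__(self) -> None:
        self._entries: dict[str, SkillEntry] = {}

    def publish(self, entry: SkillEntry) -> None:
        # Ownership is mandatory: an unowned skill is not accepted.
        if not entry.owner:
            raise ValueError("every published skill needs an owner")
        self._entries[entry.name] = entry

    def find(self, *tags: str) -> list[str]:
        """Names of skills carrying all the given tags, sorted alphabetically."""
        wanted = set(tags)
        return sorted(e.name for e in self._entries.values() if wanted <= e.tags)

    def owner_of(self, name: str) -> str:
        """Who to contact with issues or improvements for a skill."""
        return self._entries[name].owner
```

Publishing is what moves a skill out of one team’s private workflow, `find` is the discoverability mechanism, and `owner_of` gives the feedback loop somewhere to land. In practice this could just as easily be a directory of manifest files in a repository. The point of the sketch is the required fields, not the storage.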

The open/closed principle threads through all of this. Teams can’t unilaterally change the strategic methodology’s core process — that’s closed for modification, evolved through deliberate governance. But they can build new tactical skills, adapt existing ones to their context, and contribute feedback that informs the methodology’s evolution — that’s open for extension.

Start simple and evolve complexity as patterns emerge. Don’t over-engineer the framework before you have practitioners using it. As with building proper foundations before scaling, the initial investment in structure pays compounding returns as the organization grows its AI capabilities.

Engineer Your Engineering

Code generation is solved. The engineering challenge has moved up the stack — to processes, roles, and organizational design. The question facing engineering leaders isn’t “should we use AI?” It’s “how do we adapt our entire development lifecycle so that AI capabilities compound across the organization instead of fragmenting into a hundred isolated experiments?”

The bottom-up trap is real and tempting. Organic experimentation feels like progress. But without structure to consolidate learning, it produces local maxima, redundant reinventions, and Shadow AI that undermines organizational coherence.

The answer isn’t top-down control. It’s structured extensibility — a layered approach where strategic methodologies provide the stable core, tactical skills provide the specialized extensions, and feedback loops ensure continuous improvement with clear ownership.

The organizations that treat their engineering practices with the same architectural rigor they apply to their software systems — closed for modification at the core, open for extension at the edges — will define the next era of software delivery.

We’ve spent decades learning to engineer software. It’s time to engineer how we engineer.